The Future of Tech: Unraveling the Mysteries of Brain-Computer Interfaces

David K.

Brain-computer interfaces sound like sci-fi—until you realize we’re already here. Not “telepathy chips for everyone” here, but real systems that can pick up brain signals and turn them into actions on a screen.

If you’ve ever wondered how close we actually are to controlling technology with our thoughts, this article breaks it down in plain English: what BCIs are, what they can do right now, and what still has to happen before they become mainstream.

What Brain-Computer Interfaces Really Are

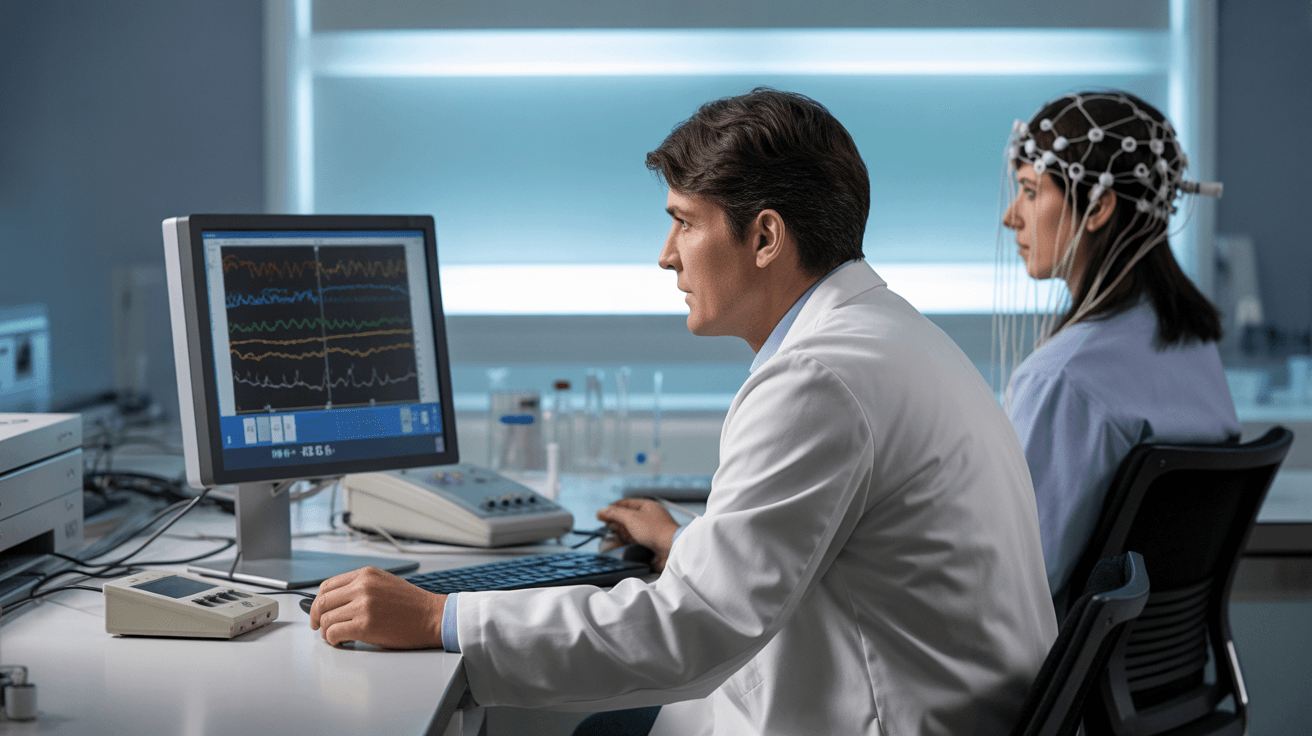

A brain-computer interface (BCI) is a system that reads signals from the brain and translates them into commands for a device. Think of it like an input method—similar to a mouse or keyboard—except the “input” comes from neural activity.

The cleanest way to understand it is this: your brain produces electrical signals, those signals can be measured, and software can learn patterns that match intent. The results range from basic cursor movement to more advanced communication systems.

If you want a reliable medical definition of BCIs and how they’re used in real settings, the Mayo Clinic’s overview of brain stimulation and related neurotech is a useful starting point for the broader context.

Key insight:

The “magic” in BCIs isn’t the hardware alone. It’s the combination of signal detection + pattern recognition + training over time that makes intention measurable.
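To make the "pattern recognition + training" idea concrete, here is a minimal sketch of a calibration step. It is an illustration only, not how any particular BCI product works: the feature values, the labels `rest` and `move_cursor`, and the helper names `train_centroids` and `predict` are all made up for this example. It uses a nearest-centroid classifier as a simple stand-in for the machine learning models real systems use.

```python
from statistics import mean

def train_centroids(trials):
    """Average the feature vectors recorded for each label during calibration."""
    by_label = {}
    for features, label in trials:
        by_label.setdefault(label, []).append(features)
    return {
        label: [mean(column) for column in zip(*rows)]
        for label, rows in by_label.items()
    }

def predict(centroids, features):
    """Return the label whose centroid is closest (squared Euclidean distance)."""
    def distance(centroid):
        return sum((a - b) ** 2 for a, b in zip(features, centroid))
    return min(centroids, key=lambda label: distance(centroids[label]))

# Hypothetical calibration trials: [alpha-band power, beta-band power] per trial.
calibration = [
    ([0.9, 0.2], "rest"),
    ([1.1, 0.3], "rest"),
    ([0.3, 0.8], "move_cursor"),
    ([0.2, 1.0], "move_cursor"),
]

centroids = train_centroids(calibration)
print(predict(centroids, [0.25, 0.9]))
```

The point of the sketch is the workflow: the user repeats known actions, the software stores what those actions look like in the signal, and new readings are matched against that stored picture. More calibration trials generally give a more reliable picture, which is why training time matters.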

How BCIs Work in Plain Language

BCI systems typically follow the same pipeline, whether it’s a non-invasive headset or a surgically implanted device:

- Capture: sensors measure brain activity (electrical signals)

- Clean up: software filters noise and isolates useful patterns

- Decode: machine learning models translate patterns into commands

- Output: a device responds (cursor moves, letters appear, robotic arm moves, etc.)

The part most people miss is how much “noise” exists in the real world. Even tiny muscle movements, eye blinks, or background electrical interference can distort the signal and throw off decoding.
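The four steps above can be sketched in a few dozen lines of Python. This is a toy under stated assumptions, not a real BCI: the "brain signal" is simulated (a 10 Hz rhythm plus 50 Hz mains hum plus random noise), the 250 Hz sampling rate and the 0.3 threshold are arbitrary choices, and a simple power threshold stands in for a trained machine learning decoder. All function names are hypothetical.

```python
import math
import random

FS = 250  # assumed sampling rate in Hz

def capture(seconds, alpha_amp, seed=0):
    """Capture: simulate one channel (10 Hz rhythm + 50 Hz hum + random noise)."""
    rng = random.Random(seed)
    return [
        alpha_amp * math.sin(2 * math.pi * 10 * t / FS)  # pattern of interest
        + 5.0 * math.sin(2 * math.pi * 50 * t / FS)      # electrical interference
        + rng.gauss(0, 1.0)                              # background noise
        for t in range(int(seconds * FS))
    ]

def clean_up(samples, window=5):
    """Clean up: remove the offset, then smooth with a moving average.
    At 250 Hz, a 5-sample window spans one full 50 Hz cycle, so the hum cancels."""
    offset = sum(samples) / len(samples)
    centered = [s - offset for s in samples]
    return [
        sum(centered[i:i + window]) / window
        for i in range(len(centered) - window + 1)
    ]

def band_power(samples, lo_hz, hi_hz):
    """Average signal power across whole-number frequencies in [lo_hz, hi_hz]."""
    n = len(samples)
    freqs = range(lo_hz, hi_hz + 1)
    total = 0.0
    for f in freqs:
        re = sum(s * math.cos(2 * math.pi * f * i / FS) for i, s in enumerate(samples))
        im = sum(s * math.sin(2 * math.pi * f * i / FS) for i, s in enumerate(samples))
        total += (re * re + im * im) / (n * n)
    return total / len(freqs)

def decode(samples, threshold=0.3):
    """Decode: map 8-12 Hz power to a command (stand-in for a trained model)."""
    return "SELECT" if band_power(samples, 8, 12) > threshold else "IDLE"

def run_pipeline(alpha_amp):
    """Output: return the command a device would act on."""
    raw = capture(seconds=2, alpha_amp=alpha_amp)
    return decode(clean_up(raw))

print(run_pipeline(alpha_amp=4.0))  # strong 10 Hz rhythm present
print(run_pipeline(alpha_amp=0.0))  # only hum and noise
```

Note that the interference in the simulated signal is stronger than the rhythm the decoder is looking for. That is the noise problem in miniature: without the clean-up step, the decoder would mostly be measuring the wall socket.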

Two Main Types of BCIs

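Not all BCIs are built the same way. The biggest difference is whether the sensors sit outside the skull or inside it. Non-invasive BCIs use sensors placed on the scalp. Invasive BCIs use surgically implanted electrodes, which typically offer higher accuracy but carry higher risk.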

If you want to go deeper into the research side (without drowning in random blog takes), PubMed’s BCI research index is one of the cleanest places to browse real studies and clinical papers.

What BCIs Can Do Today

This is where things get interesting—because the “real” version of BCIs is already changing lives, even if it’s not consumer-friendly yet.

Right now, BCIs are most valuable in scenarios where traditional input is impossible or extremely limited. For example:

- helping people communicate after severe paralysis

- assisting with movement and motor control research

- enabling hands-free device interaction in controlled settings

- supporting rehabilitation (especially when paired with other therapies)

What’s Holding BCIs Back

BCIs are impressive, but they’re nowhere near plug-and-play for everyday people. The obstacles are a mix of physics, biology, engineering, and ethics.

- Signal quality: brain signals are small and noisy

- Training time: many systems require calibration and learning

- Comfort: headsets can be awkward, implants are invasive

- Cost: hardware, clinical support, and development are expensive

- Safety & regulation: especially for anything implanted

- Privacy: brain data is not “normal” personal data—it’s deeply sensitive

Practical warning:

If BCIs ever go mainstream, privacy won’t be a “settings toggle.” It’ll be one of the core battles—because neural data can reveal patterns about attention, fatigue, stress, and intent in ways normal trackers can’t.

Where Brain-Computer Interfaces Are Headed Next

BCIs won’t replace your phone in the next few years. What we’ll see first is a slow rollout of specialized use cases—where the value is high enough to justify the complexity.

In my experience watching how new platforms mature, the adoption curve usually looks like this:

- medical use cases lead (because the benefit is life-changing)

- research and labs expand capabilities

- enterprise pilots appear (high-cost environments where gains matter)

- consumer versions follow once cost + comfort improve

What This Means for Regular People and Businesses

If you’re not planning to implant anything in your brain (same), the main takeaway is still useful: BCIs are pushing forward a future where “input” goes beyond touchscreens and keyboards.

For businesses, the smarter move right now isn’t to chase headlines—it’s to watch the enabling tech around BCIs:

- signal processing

- real-time machine learning

- wearable sensor design

- privacy-first data handling

That’s where the commercial opportunities usually show up first.

FAQ

What is a brain-computer interface (BCI)?

A BCI is a system that reads brain activity and translates it into commands for a device, allowing communication or control without traditional inputs like a keyboard or mouse.

Do BCIs require surgery?

Not always. Non-invasive BCIs use sensors on the scalp. Invasive BCIs involve implanted electrodes and typically offer higher accuracy, but with higher risk.

Can BCIs read thoughts?

Not in the way movies show it. BCIs decode patterns linked to specific trained actions or intentions (like moving a cursor), not full private thoughts or memories.

What are BCIs used for today?

They’re most commonly used in medical and research settings—especially to help people communicate or interact with devices when movement is severely limited.

Will BCIs become mainstream consumer tech?

Eventually, maybe—but it’ll likely take years. The biggest barriers are signal quality, comfort, cost, and privacy regulation.

Key Takeaways

- ✓ Brain-computer interfaces translate neural signals into device commands.

- ✓ Non-invasive BCIs are safer, but invasive systems can be far more precise.

- ✓ The most impactful BCI use cases today are medical and assistive communication.

- ✓ BCI performance depends heavily on signal noise, training, and software decoding.

- ✓ Privacy and ethics will be major deciding factors as neural data becomes more usable.

- ✓ The near-term future is specialized adoption first, consumer tech later.
